Image created by John Tredennick using Midjourney

We have approximately 290,000 emails from and to Jeb Bush during his two terms as Florida’s governor in our discovery collection. These are the emails used during the annual TREC conferences put on by NIST. Program organizers reviewed the collection and came up with about 35 topics to test machine learning algorithms (none of which included LLMs back in those days).

Our assignment today is to find information about this topic:

Summer Olympics — All documents concerning a bid to host the Summer Olympic Games in Florida.

The question is this: How quickly can we get a handle on the issues relating to this topic, including who was involved, what positions they took, and what the arguments were on both sides?

This is not about finding relevant documents for humans to review. Rather, it is about putting large language models like GPT and Claude to work analyzing documents and answering questions. They can do in seconds what would take humans hours or days to accomplish. That is the point of our testing.

For these exercises we will be using B2, our internal system created in the Merlin lab to explore how we might best integrate the analytical power of LLMs like GPT, and now Claude, into a traditional discovery platform. Other eDiscovery platforms may or may not be able to integrate one or more LLMs into the workflow. Our goal is to identify relevant documents in response to a natural language prompt, submit them to GPT for analysis and then review its answers to our questions.

Image: John Tredennick, Merlin Search Technologies

In this case, B2’s job is to:

- Comprehend the initial prompt and identify relevant documents from the database that could aid in responding to it.

- Distill these documents into a summary that aligns with the informational requirements of the initial prompt.

- Condense this information into a form that can be fed into GPT for response. Depending on the complexity and volume of the information, this may necessitate breaking our efforts into a series of prompts and responses.

- Receive GPT’s response, which should be based on both the reviewed information and the content of the original prompt.

- Record this information, and transmit it back to the user.

B2 allows us to choose which LLM we want to use for which purpose. In this case we are using GPT-3.5 Turbo to summarize documents and Claude 2 for document analysis and synthesis.
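
The steps above, together with the per-task choice of model, can be sketched in a few lines of Python. This is only an illustration of the workflow as described, not B2's actual code: the names `Document`, `pack`, and `run_pipeline`, the `max_chars` budget, and the idea of modeling each LLM as a plain text-in, text-out function are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Assumption: each LLM is modeled as a function from prompt text to response text.
LLM = Callable[[str], str]


@dataclass
class Document:
    doc_id: str
    text: str


def pack(summaries: List[Tuple[str, str]], max_chars: int) -> List[str]:
    """Group summaries into batches that stay within a rough size budget."""
    batches, current = [], ""
    for doc_id, summary in summaries:
        entry = f"[{doc_id}] {summary}\n"
        if current and len(current) + len(entry) > max_chars:
            batches.append(current)
            current = ""
        current += entry
    if current:
        batches.append(current)
    return batches


def run_pipeline(
    prompt: str,
    retrieve: Callable[[str], List[Document]],
    models: Dict[str, LLM],  # hypothetical routing: one model per task
    max_chars: int = 2000,
) -> dict:
    # Step 1: find documents that could help answer the prompt.
    docs = retrieve(prompt)
    if not docs:
        return {"prompt": prompt, "sources": [], "answer": "No relevant documents found."}

    # Step 2: summarize each document as it relates to the prompt.
    summaries = [
        (d.doc_id, models["summarize"](f"Topic: {prompt}\nSummarize:\n{d.text}"))
        for d in docs
    ]

    # Step 3: condense into one or more prompts, depending on volume.
    batches = pack(summaries, max_chars)

    # Step 4: get the analysis model's response, combining partial answers if needed.
    partials = [models["analyze"](f"Question: {prompt}\nSummaries:\n{b}") for b in batches]
    if len(partials) == 1:
        answer = partials[0]
    else:
        answer = models["analyze"](
            f"Question: {prompt}\nCombine these partial answers:\n" + "\n".join(partials)
        )

    # Step 5: record the result and return it to the user.
    return {"prompt": prompt, "sources": [d.doc_id for d in docs], "answer": answer}
```

In a setup like the one described here, `models["summarize"]` would wrap a call to a summarization model and `models["analyze"]` a call to an analysis model; keeping them as separate entries is what lets the two tasks use different LLMs.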

This is an ongoing project where we will use B2 to investigate different TREC topics. Our purpose is to illustrate the new capabilities an LLM like GPT can offer for investigations and discovery.

Search engines find relevant candidates for review but stop there. Expensive legal professionals then have to take over to review and analyze the found documents, determine whether they are relevant, and uncover the story they might tell about the topic. Suffice it to say that the process is tedious, time-consuming and expensive.

Can B2 do better? Let’s see. Keep an eye on how well B2 performs its tasks through these exercises. We believe you’ll be impressed with its analytical prowess and capabilities.

We start with a simple natural language question to get our investigation started:

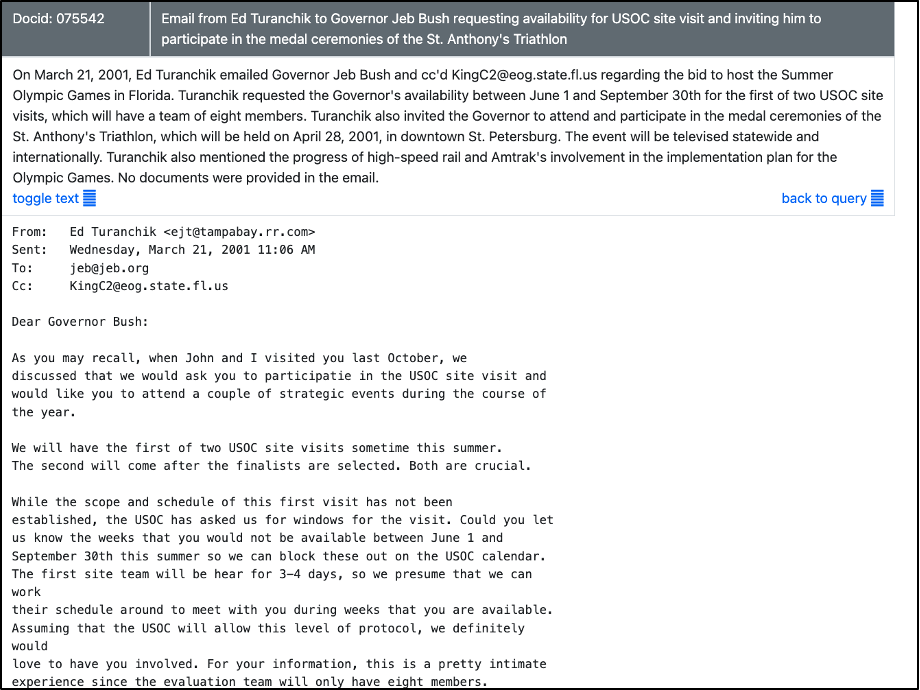

Before preparing the above response, B2 used a vector-based search engine to find 30 of the most relevant documents, summarizing each as it related to the topic being investigated. Here are some sample summaries:

B2 provides a link from the summary to the document itself:

Image: John Tredennick, Merlin Search Technologies
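
For readers unfamiliar with vector-based search, the retrieval step mentioned above can be illustrated with a small sketch. It assumes documents and the query are turned into vectors and ranked by cosine similarity, which is a common approach but not necessarily how B2's search engine works. The `embed` function below is a deliberately crude word-count stand-in for a real embedding model, and `embed`, `cosine`, and `top_k` are hypothetical names.

```python
import math
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Counter:
    # Toy stand-in for a real embedding model: a bag-of-words count vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_k(query: str, docs: Dict[str, str], k: int = 30) -> List[str]:
    # Score every document against the query and keep the k best matches.
    q = embed(query)
    scored = [(cosine(q, embed(text)), doc_id) for doc_id, text in docs.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for _, doc_id in scored[:k]]
```

The default `k=30` mirrors the 30 documents retrieved in this exercise. A production system would replace `embed` with a learned embedding model and use an index rather than scoring every document on each query.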

How Long? How Much?

You might be wondering how long it took, from the time we entered our topic, for B2 to retrieve the search results, summarize the documents, and produce the above analysis of key issues. We received the first document summary in around 20 seconds, and the full analysis was complete in under 2 minutes.

How much did this cost us in LLM processing fees? About 30 cents. Compare these times and costs with doing it yourself, or paying someone to do it for you.

Now, that doesn’t cover the costs of building our platform or developing our expertise. But, well, you get the point. This is game-changing stuff.

Pros and Cons

Next we will ask B2 for more information about the pros and cons of the issues being discussed.

We will end this exercise by asking B2 to prepare an investigation report on our topic:

That’s the end of today’s exercise.

Conclusion

Welcome to a new era of discovery, one where we can quickly move from search hits to discovery answers with the power of artificial intelligence. By integrating an LLM with an algorithmic search engine like Sherlock, legal professionals can harness the capabilities of large language models to streamline discovery and quickly dive deeper into the documents that are most relevant to the case.

Up until now, keywords were the primary means to find relevant documents. The search engine’s job was to locate potential candidates that might be responsive to your information needs. Once this step was complete, the search engine’s job ended. The onus then fell on you and your team to read, analyze, and interpret the results, a process that was often tedious, time-consuming, and expensive.

Generative AI systems like GPT and Claude can take discovery beyond simple search. They can analyze result sets and use them to answer questions or otherwise provide meaningful information in response to your prompt. For the first time in history, we have at our fingertips a generative AI system that can assist with the second half of the discovery process: ESI review and analysis.

The applications we have explored in this article merely scratch the surface of an LLM’s potential to assist in investigations and discovery efforts. Using an integrated system like B2 to synthesize information, summarize documents, answer questions and create investigation reports will make investigations and discovery more efficient, improving outcomes and saving time and money.